The Smartest Tool in History Is Making Our Kids Less Intelligent

We need to teach children to interrogate everything. Not with anxiety, but with curiosity. To hold up a piece of information and turn it slowly in the light. To understand that a deepfake isn’t just a technical trick: it’s a weapon, and someone made it for a reason.

To ask not just ‘what does this say?’ but ‘what does this want from me?’

And we need to model this for them.

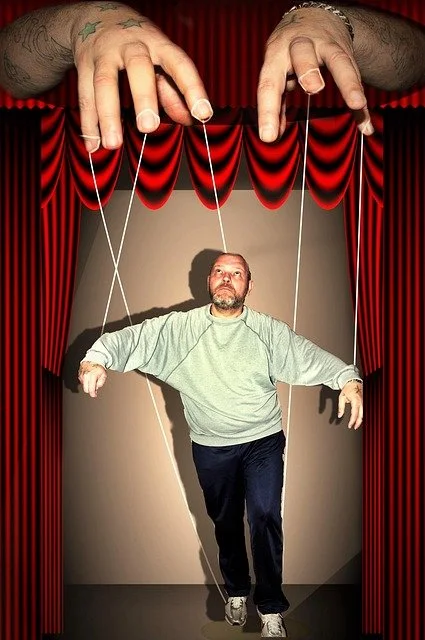

Is human thought being absorbed by AI?

If the reasoning process becomes opaque even to those who are responsible for its consequences, accountability shifts from something lived to something theoretical.

Leaders may still sign off on decisions, yet their capacity to interrogate the underlying thinking diminishes if they have no experiential sense of how that thinking was formed.

Are we building intelligence, or outsourcing it?

If we don’t actively teach people how to question, how to verify, how to tolerate being wrong, and how to think independently of algorithms, we risk creating a society that functions efficiently but thinks very little.

And that’s the quiet fear running through all of these comments. Not that AI will become too intelligent. But that we’ll stop becoming intelligent ourselves.